Facebook whistleblower highlights toxic pattern in social media

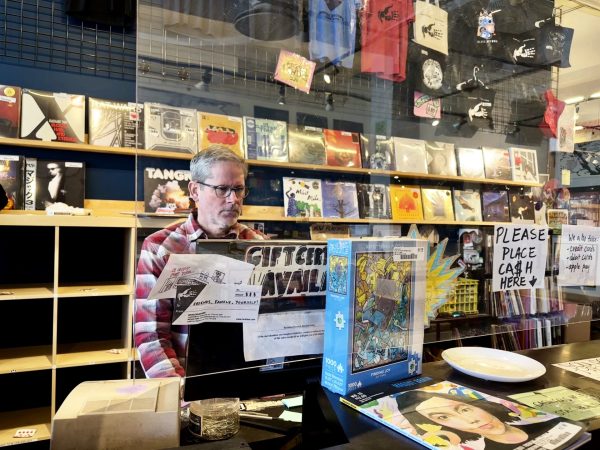

SOLEN FEYISSA | WIKIMEDIA COMMONS

Servers for Facebook’s apps (Facebook, Instagram, WhatsApp and Messenger) all went down for hours on Oct. 4.

Earlier this month, a former Facebook employee named Frances Haugen revealed privileged information about the social media giant on CBS’s “60 Minutes.” On the show, Haugen brought to light multiple internal documents showing what she claimed was malpractice by the company. The documents showed that Facebook’s management team had been aware for years of many of the negative consequences of the algorithms the company uses to give content recommendations and, most importantly to the company, to feed sponsored content to its users.

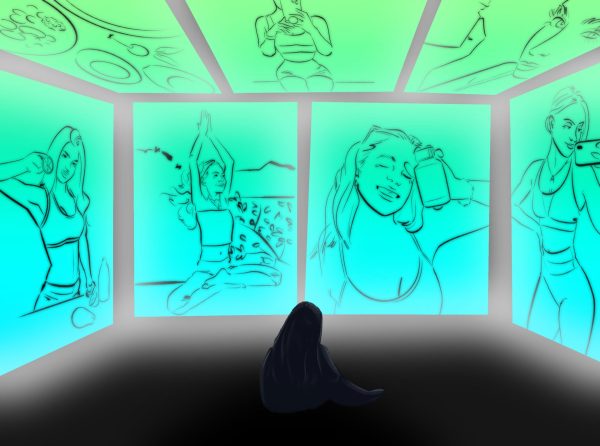

Some of the documents in the report also show that Facebook executives had access to internal research indicating that one of the company’s subsidiaries, Instagram, had produced negative mental health outcomes in teenage girls who became image-obsessed as a result of frequent use of the app. The documents also made clear that Facebook’s problems are not limited to the United States. They showed the company was aware that its platforms were primary channels for ethnic violence and hate speech against Muslims in Sri Lanka and Myanmar. Facebook invests very little in content moderation in both countries, even though almost everyone there who is connected to the internet uses Facebook as their main communication platform.

These are only a few examples, but they point to a problem in the way we interact with social media in our day-to-day lives. Many people feel Facebook has a negative effect on society as a whole.

“I totally believe Facebook has had a negative impact on society. Just look at the last presidential election and all of the uncertainty surrounding it because of misinformation and disinformation on social media,” said Brian Wright, a senior in DePaul’s College of Communication.

All told, Haugen copied over 10,000 of these documents from Facebook’s internal research and communications. That is certainly an impressive operation for one person to carry out covertly at one of the largest corporations in the world, but what did we really learn that the public hasn’t been telling Facebook for years?

In her interview with “60 Minutes,” Haugen stated, “The thing I saw at Facebook over and over again was there were conflicts of interest between what was good for the public and what was good for Facebook. And Facebook, over and over again, chose to optimize for its own interests, like making more money.”

The question that must be raised is one of power and corporate responsibility. How much power does Facebook really have over our daily lives? Is that power real or manifested? And what broader responsibilities does Facebook, a publicly traded corporation, have to the general public besides making money for its shareholders? Many people would like to believe that Facebook is some kind of social public utility the government can regulate in response to this whistleblower complaint, or they would like to see the government regulate it the way it regulated big tobacco after that industry’s own whistleblower exposé on “60 Minutes” in the 1990s.

Unfortunately, this may not be as apt a comparison as many of us would like. The nature of a company like Facebook is far different from that of big tobacco or a public utility, and the company’s international activity and user base make it very difficult to regulate.

“The reality here is that these are now multinational corporations and that makes them very difficult for the U.S. government to regulate on its own. Also, these services have become an integral part of many people’s personal and professional lives, so a regulatory change would be very difficult to achieve,” Samantha Close, DePaul professor of communication studies, told The DePaulia.

So where does that leave us? We understand that Facebook and related media companies have a problem with vitriolic political speech and hate speech. We also understand that the algorithms that filter content on these platforms promote such content because it is divisive and generates a whole lot of “Meaningful Social Interactions” in the comments (a.k.a. ranting political fights).

We must also consider the benefits of these services and algorithms, such as a small family business being able to sell and scale its products across the country, or people being led to social group pages that align with their interests, perhaps making them feel less alienated in the world and giving them a sense of community.

The bottom line is that Facebook and its associated apps and algorithms are not going anywhere anytime soon. Because of the company’s international scope and the public good it provides despite many observable negative outcomes, we cannot rely on Congress to impose any meaningful change on the company or the industry as a whole. That change must come from inside, but it will only happen with pressure applied by us, the general public, and not just pressure on Facebook, but on its biggest advertisers as well.

If whistleblower Frances Haugen is correct that Facebook will always prioritize its profits over the public good, then it is incumbent upon all of us as everyday citizens to push for incremental changes by buying less from its marketplace, getting our news and information from alternative news feeds (Apple News, Flipboard, etc.), and having our “Meaningful Social Interactions” on Facebook and Instagram pages that promote positive content (Tank’s Good News, Upworthy, etc.). At the end of the day, only our actions can show Facebook that it will make profits only if it aligns those profits with the public good.